Model interpretability techniques help you understand why a machine learning model makes certain predictions, especially when the system feels complex or unclear. These methods give you clearer insight into hidden logic, improve trust, and support explainable AI in high-risk industries.

When you apply strong model interpretability approaches, you can uncover feature patterns, detect unusual behavior, and correct errors before they affect real people. These techniques also strengthen AI transparency, helping teams verify fairness, stability, and performance.

As machine learning grows more advanced, businesses need reliable tools that reveal how models think. With the right strategy, interpretable machine learning becomes safer and more effective.

Why Model Interpretability Matters in Real-World AI Systems

Interpretability matters because real systems need trust, safety, and clarity. Doctors want to know why medical diagnosis models recommend a treatment. Banks must explain why a credit scoring model rejects a loan. These demands push teams to use explainable AI for stability and confidence.

Regulators also expect transparency. The EU's GDPR restricts decisions based solely on automated processing (Article 22) and is often read as implying a "right to explanation", although the exact scope of that right is debated. Clear understanding also helps you detect errors, reduce hidden bias, and improve algorithmic fairness across high-stakes industries like finance and healthcare.

Interpretable Models vs. Black-Box Models

Interpretable models, often called white-box models, reveal how features shape predictions. You can easily track patterns in decision trees, linear regression models, or logistic regression because their logic stays visible. This makes it simple to explain results to non-technical users.

Black-box models spread their reasoning across many layers and parameters. Neural networks, including deep learning models and transformers, often reach high accuracy, but no single weight maps to a human-readable rule. Teams using them find it harder to explain individual outcomes. That’s why they use post-hoc interpretability tools to approximate what the model is doing.

Types of Model Interpretability: Intrinsic vs. Post-Hoc

Intrinsic interpretability refers to models designed for clarity. Their structure naturally supports model transparency, so you can examine each part without extra tools. This clarity helps teams working in safety-critical fields needing honest insight.

Post-hoc interpretability works after the model is trained. Developers use saliency maps, SHAP values, or local surrogate models to uncover hidden logic behind predictions. These tools show patterns even when the model stays complex. Many teams rely on post-hoc strategies when accuracy demands stronger models.

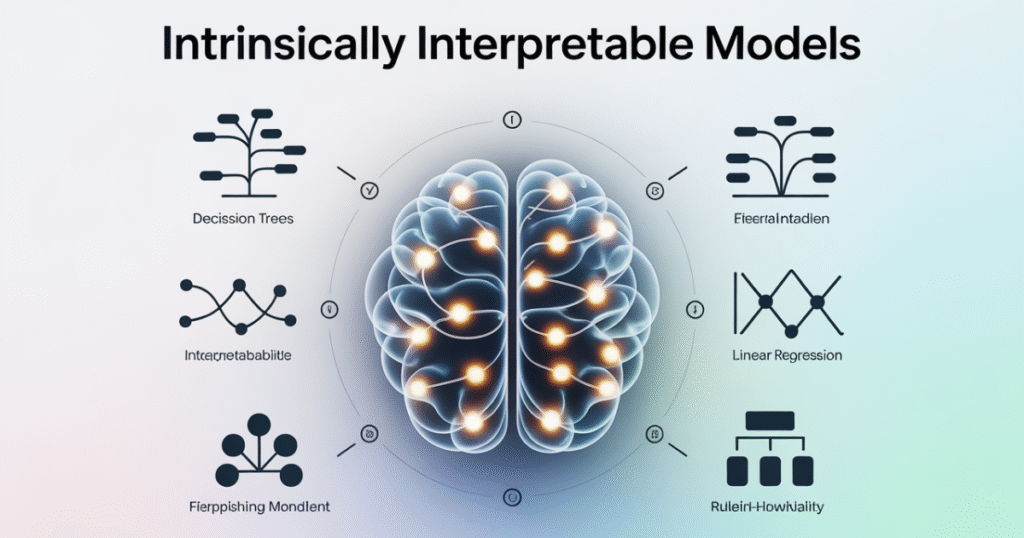

Intrinsically Interpretable Models

Intrinsic models offer transparency by default. Systems like decision trees highlight feature splits, while linear regression models show how each variable influences the target. Many teams use them when they need model explainability methods that stay simple.
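To make "transparency by default" concrete, here is a minimal sketch of reading a linear model directly. The feature names, weights, and intercept are hypothetical values chosen for illustration, not taken from any real scoring system.

```python
# Hypothetical weights for a toy linear credit-score model (illustrative only).
INTERCEPT = 300.0
WEIGHTS = {"income_k": 2.0, "years_employed": 15.0, "missed_payments": -40.0}

def predict(applicant):
    """Linear model: intercept plus the weighted sum of the features."""
    return INTERCEPT + sum(WEIGHTS[name] * applicant[name] for name in WEIGHTS)

def explain(applicant):
    """Return each feature's contribution (weight * value) to the score."""
    return {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}

applicant = {"income_k": 50.0, "years_employed": 4.0, "missed_payments": 2.0}
score = predict(applicant)
contributions = explain(applicant)

# Print contributions from largest to smallest absolute effect.
for name, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>16}: {value:+.1f}")
print(f"{'score':>16}: {score:.1f}")
```

Because the model is a plain weighted sum, the explanation is the model itself: the intercept plus the listed contributions adds up exactly to the score.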

These models support clarity for risk-sensitive tasks. A healthcare team may choose a transparent model to justify results. A legal team may prefer white-box models to support audit trails and ensure fairness. Their built-in clarity reduces debugging time and strengthens reliability.

Post-Hoc Model Interpretability Techniques

Post-hoc methods reveal hidden logic after a model is built. Popular tools include SHAP values, LIME (Local Interpretable Model-agnostic Explanations), and permutation feature importance. Each tool tries to create human-understandable explanations from deeply layered systems.

Developers use these techniques to inspect complex models without giving up their accuracy. Post-hoc tools highlight key signals, show sensitivity to features, and can expose where bias enters. This clarity helps teams interpret outputs even when the model stays opaque.

Global Model-Agnostic Interpretation Methods

Global methods explain overall model behavior. Partial dependence plots (PDPs) show how the average prediction changes as one feature varies while the other features keep their observed values. PDP visualization helps teams see broad relationships instead of single examples.
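A partial dependence curve can be sketched in a few lines. This is a simplified illustration, assuming the model is any function that maps a feature row to a number; `toy_model` and the sample rows are hypothetical stand-ins for a trained model and real data.

```python
def partial_dependence(predict, rows, feature_index, grid):
    """Average prediction when one feature is forced to each grid value."""
    curve = []
    for value in grid:
        total = 0.0
        for row in rows:
            modified = list(row)
            modified[feature_index] = value  # overwrite only the feature of interest
            total += predict(modified)
        curve.append(total / len(rows))
    return curve

# Hypothetical stand-in for a trained model.
def toy_model(row):
    return 2.0 * row[0] + row[1]

rows = [[0.0, 1.0], [5.0, 2.0], [9.0, 3.0]]
pdp_curve = partial_dependence(toy_model, rows, feature_index=0, grid=[0.0, 1.0, 2.0])
print(pdp_curve)  # one averaged prediction per grid value
```

Each point on the curve averages over every row, which is why a PDP describes the model globally and can hide effects that differ between subgroups.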

Some teams use permutation feature importance to determine which inputs shape predictions most. The method shuffles one feature at a time and measures how much the model's error increases. These global tools support insights across the entire dataset. They are common in industries that need transparent risk predictions.
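The idea behind permutation feature importance can be sketched without any library. This is a minimal illustration, assuming mean squared error as the metric; `toy_model` and the generated rows are hypothetical, and production code would normally rely on a tested library implementation instead.

```python
import random

def mean_squared_error(predict, rows, targets):
    return sum((predict(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)

def permutation_importance(predict, rows, targets, n_repeats=5, seed=0):
    """Increase in error after shuffling each feature column, averaged over repeats."""
    rng = random.Random(seed)
    baseline = mean_squared_error(predict, rows, targets)
    importances = []
    for j in range(len(rows[0])):
        increases = []
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)  # break the link between feature j and the target
            shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
            increases.append(mean_squared_error(predict, shuffled, targets) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances

# Hypothetical model that uses feature 0 and ignores feature 1.
def toy_model(row):
    return 3.0 * row[0]

rows = [[float(i), float((7 * i) % 11)] for i in range(30)]
targets = [toy_model(r) for r in rows]
importances = permutation_importance(toy_model, rows, targets)
print(importances)
```

Shuffling the ignored feature leaves the error unchanged, so its importance is zero, while shuffling the feature the model depends on raises the error.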

Local Model-Agnostic Interpretation Methods

Local methods study single predictions. Tools such as LIME fit a simple surrogate model around one prediction to highlight which features shaped that specific outcome. This helps you analyze situations where explaining individual results matters.
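The local surrogate idea can be sketched as follows. This is a simplified illustration in the spirit of LIME, not the LIME library's actual algorithm: it perturbs the instance with Gaussian noise, weights samples by proximity, and fits a weighted linear model. The function names, kernel, and default parameters are assumptions made for this sketch.

```python
import math
import random

def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x

def local_surrogate(predict, instance, n_samples=500, scale=0.3, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear model around one instance.

    Returns (intercept, coefficients); coefficients are local slopes per feature.
    """
    rng = random.Random(seed)
    d = len(instance)
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]  # accumulates X^T W X
    xtwy = [0.0] * (d + 1)                          # accumulates X^T W y
    for _ in range(n_samples):
        delta = [rng.gauss(0.0, scale) for _ in range(d)]
        sample = [v + dv for v, dv in zip(instance, delta)]
        weight = math.exp(-sum(dv * dv for dv in delta) / kernel_width ** 2)
        row = [1.0] + delta  # centered features, so the intercept is the local prediction
        y = predict(sample)
        for i in range(d + 1):
            xtwy[i] += weight * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * row[i] * row[j]
    beta = solve_linear_system(xtwx, xtwy)
    return beta[0], beta[1:]

# Hypothetical nonlinear black box.
def black_box(x):
    return x[0] ** 2 + x[1]

intercept, coefficients = local_surrogate(black_box, [1.0, 2.0])
print(intercept, coefficients)
```

The coefficients approximate how the black box responds to each feature near the chosen instance only; a different instance can produce a very different explanation.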

Counterfactual explanations show the change to the inputs that would alter an outcome, ideally the smallest one. Teams apply these methods in fields like loan approval, where a single decision affects real people. Local clarity builds trust and reduces frustration.
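A counterfactual search can be sketched as a simple loop. This is a deliberately naive illustration that changes one feature in fixed steps; the `approve` rule, its coefficients, and the applicant are hypothetical, and practical counterfactual methods search over several features and add plausibility constraints.

```python
def approve(applicant):
    """Hypothetical loan rule standing in for a trained classifier."""
    score = 0.04 * applicant["income_k"] - 0.5 * applicant["missed_payments"]
    return score >= 2.0

def find_counterfactual(applicant, feature, step, max_steps=100):
    """Change one feature in fixed steps until the decision flips, or give up."""
    original = approve(applicant)
    candidate = dict(applicant)
    for _ in range(max_steps):
        candidate[feature] += step
        if approve(candidate) != original:
            return candidate
    return None  # no flip found within the search budget

applicant = {"income_k": 40.0, "missed_payments": 1.0}
counterfactual = find_counterfactual(applicant, "income_k", step=5.0)
print(counterfactual)
```

The result reads as a statement a customer can act on: the application would have been approved at the income level found by the search, with everything else unchanged.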

Key Interpretability Methods Explained

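Interpretability tools support visibility inside complex models. SHAP values build on Shapley values from cooperative game theory: each feature receives its average marginal contribution to a prediction, and the contributions sum to the difference between that prediction and a baseline. LIME gives quick approximations by fitting a local surrogate model.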
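The game-theoretic idea behind SHAP can be shown with an exact Shapley computation on a tiny model. This sketch is not the SHAP library's API or its estimators: it enumerates every coalition, which is only feasible for a handful of features, and it assumes that "missing" features are replaced by values from a baseline row. `toy_model`, `instance`, and `baseline` are hypothetical.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, instance, baseline):
    """Exact Shapley values; features outside a coalition take baseline values."""
    n = len(instance)

    def value(coalition):
        mixed = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(mixed)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi += weight * (value(s | {i}) - value(s))
        phis.append(phi)
    return phis

# Hypothetical model with one additive term and one interaction term.
def toy_model(x):
    return 2.0 * x[0] + x[1] * x[2]

instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phis = shapley_values(toy_model, instance, baseline)
print(phis)
```

The additive feature receives its own effect, the two interacting features split their joint effect equally, and the values sum to the gap between the prediction and the baseline prediction.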

Other tools like saliency maps, gradient-based explanations, and activation maximization help interpret deep layers inside neural networks. These methods reveal hidden influences, highlight activated neurons, and track internal learning patterns.
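The core of a gradient-based explanation is the sensitivity of the output to each input. The sketch below approximates that gradient with central finite differences on a hypothetical `toy_model`; real saliency maps for neural networks usually obtain the gradient by backpropagation in a deep learning framework, so treat this as a stand-in for the idea rather than a practical implementation.

```python
def saliency(predict, inputs, eps=1e-5):
    """Absolute central-difference gradient of the output w.r.t. each input."""
    scores = []
    for i in range(len(inputs)):
        up = list(inputs)
        up[i] += eps
        down = list(inputs)
        down[i] -= eps
        scores.append(abs(predict(up) - predict(down)) / (2 * eps))
    return scores

# Hypothetical differentiable model.
def toy_model(x):
    return x[0] ** 2 - 3.0 * x[1]

scores = saliency(toy_model, [2.0, 1.0])
print(scores)  # larger score means the output is more sensitive to that input
```

For an image model, the same per-input scores arranged in the shape of the image form the saliency map.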

Model-Specific Interpretability Tools

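Model-specific tools target particular architectures. Attention weights show which tokens a transformer layer attends to, although they are not always a faithful explanation of the output. Layer-wise relevance propagation (LRP) traces a prediction backward through the network to highlight relevant input regions, for example in vision models.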

Teams studying deep learning models often use neuron activation patterns to interpret inner behaviors. These methods help developers debug unusual predictions and confirm that internal signals align with human reasoning.

How to Choose the Right Interpretability Method

Choosing the right method depends on your goals. If you need full transparency, an intrinsic model works best. If you want accuracy but still need clarity, a post-hoc tool such as SHAP offers deeper insight.

Large institutions often choose based on compliance needs. Finance teams may need local explanations they can give to individual customers. Healthcare teams may focus on stability and ethical practice. Match the method to the decisions your industry has to justify.


Model Interpretability Evaluation Techniques

Evaluation tests show how well your interpretability tools work. Developers check stability by rerunning a method on resampled data or with different random seeds and comparing the results. They check consistency by seeing whether the same features stay at the top of the ranking.

They also evaluate explanations with people, checking whether users can understand an explanation and act on it. If humans cannot grasp the explanation, the tool fails. These checks support safe deployment and reduce ambiguity in high-risk systems.
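One simple way to quantify stability is the overlap between the top-ranked features of two explanation runs. The sketch below uses Jaccard overlap of the top-k sets; the metric choice, the feature names, and the importance numbers are all hypothetical and only illustrate the check.

```python
def top_k(importances, k):
    """Names of the k features with the largest absolute importance."""
    ranked = sorted(importances, key=lambda name: abs(importances[name]), reverse=True)
    return set(ranked[:k])

def top_k_overlap(run_a, run_b, k):
    """Jaccard overlap of the top-k feature sets from two explanation runs."""
    a, b = top_k(run_a, k), top_k(run_b, k)
    return len(a & b) / len(a | b)

# Hypothetical importances from two runs of the same explanation method.
run_1 = {"income": 0.42, "age": 0.10, "missed_payments": -0.35, "zip_code": 0.02}
run_2 = {"income": 0.39, "age": 0.03, "missed_payments": -0.31, "zip_code": 0.08}

overlap_top2 = top_k_overlap(run_1, run_2, k=2)
overlap_top3 = top_k_overlap(run_1, run_2, k=3)
print(overlap_top2, overlap_top3)
```

An overlap of 1.0 means both runs agree on the top features, while lower values signal that the explanation changes between runs and deserves a closer look.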

Practical Applications of Explainable AI (XAI)

Explainable AI is used across many industries. Hospitals use XAI to verify diagnostic suggestions and protect patients. Banks use explanations of fraud detection models to understand why a transaction was flagged. Insurers apply XAI to risk assessment algorithms to check that decisions are fair.

Courts also check recidivism prediction models for bias. Automakers use explanations of autonomous vehicle decision systems to review how a vehicle responded in an emergency. In each of these sectors, XAI helps teams check that decisions stay safe, reliable, and fair.

Challenges & Limitations of Model Interpretability

Interpretability faces many challenges. Some models remain too complex for full clarity. Teams must weigh the trade-off between interpretability and accuracy when choosing an approach.

Bias can also hide deep inside models, and explanation tools do not always surface it. Interpretation is partly subjective too, because different tools can give different results for the same prediction. Teams must check outputs carefully to avoid misleading conclusions.


Best Practices for Implementing Explainable AI

Teams should start with transparency goals. They must choose models that match risk levels, regulatory needs, and customer expectations. This ensures accountability across the entire workflow.

Developers should also test explanations with real users to see if they make sense. This supports algorithmic accountability and strengthens trust. When users understand a decision, they tend to accept it more easily.

Future of Model Interpretability & Explainability

The future of interpretability moves toward unified tools. New research explores deeper visibility inside deep learning models using hybrid approaches. These systems combine global and local explanations for better clarity.

AI oversight will grow stronger as governments push new regulations. Industries will adopt stronger tools to meet legal expectations. This future strengthens fairness, safety, and trust.

FAQs

What are interpretability techniques?

Interpretability techniques explain how a machine learning model makes predictions, helping you understand the factors influencing its decisions.

What is model interpretability?

Model interpretability is the ability to understand, trust, and explain a model’s behavior and prediction process.

What are the main 3 types of ML models?

The three main types are supervised learning models, unsupervised learning models, and reinforcement learning models.

What are LLM interpretability techniques?

LLM interpretability techniques include attention analysis, activation visualization, prompt probing, and embedding interpretation.

Is 70% model accuracy good?

70% accuracy is acceptable for some tasks, but its value depends entirely on the problem’s complexity and risk level.
